Dedicated AI training capacity

A fit for organizations that have outgrown office-adjacent infrastructure and need a clearer path for dense GPU training environments, predictable thermal behavior, and disciplined commissioning.

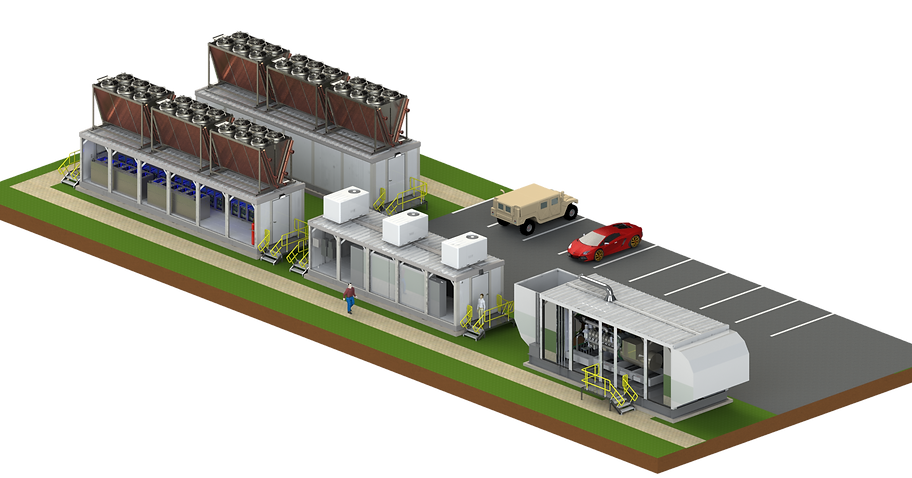

Deploy high-density AI capacity with a clearer operating model, disciplined commissioning, and long-term serviceability. The NOMAD platform is designed for dense AI workloads that need modular capacity, two-phase immersion cooling, and a more supportable path than improvised facility expansion.

Thermal architecture designed to support dense GPU environments with stable operating conditions.

Built for enterprise AI training and inference with repeatable deployment standards.

Add capacity in practical phases while maintaining serviceability and clear operating controls.

Observability and response workflows focused on uptime, performance consistency, and risk control.

This is typically the right conversation when a team has moved past ad hoc rack expansion and needs a cleaner facility strategy for dense AI operations, phased growth, and accountable uptime.

Useful when data-control requirements make shared platforms a poor fit and the project needs isolated capacity, operational ownership, and infrastructure built around private deployment rules.

A modular path matters when the project timeline, power delivery, or GPU procurement cycle is moving in phases and the team needs room to expand without rebuilding the entire operating model.

The platform is only one part of the project. The real work is aligning power, thermal strategy, facility operations, delivery sequencing, and the production handoff around how the compute will actually be used.

We scope the practical inputs first: power envelope, rack density, upstream networking, and what can be commissioned on the schedule the client is actually working against.

E3's NOMAD platform is designed around two-phase immersion cooling for dense AI infrastructure, which changes the thermal conversation from generic air management to a more deliberate density and serviceability model.

The value is not just capacity. It is how the environment is brought online, monitored, handed off, and kept supportable once the compute is in production.

A modular footprint gives teams a way to plan expansion around real workload growth, procurement timing, and operating cost instead of guessing everything up front.

The strongest AI infrastructure decisions connect facility design, GPU procurement, and production operations instead of treating them as separate buying motions.

Review the current sourcing path for Dell, Supermicro, and HPE GPU systems if the project also needs server selection and accelerator planning.

Read practical guidance on HPC procurement, immersion cooling, AI infrastructure operations, and private deployment planning before the build is finalized.

If the AI environment also needs monitoring, security controls, or integration with a broader IT operating model, review the MSP service path as well.

Send the power envelope, deployment timeline, workload mix, and expansion assumptions. We will map the next practical step instead of sending generic platform filler.

Answers to the common questions clients ask when they are evaluating a modular AI infrastructure path instead of expanding around an improvised facility footprint.

A modular approach is usually strongest when the project needs dense AI capacity, a phased expansion path, and a cleaner thermal and operating model than a piecemeal room retrofit can offer. It is especially relevant when the compute demand is moving faster than the rest of the facility.

Two-phase immersion changes the thermal design conversation for dense GPU infrastructure by supporting tighter thermal control and higher density than many traditional air-cooled layouts. The right fit still depends on the operating model, serviceability, and facility goals around the deployment.

The platform decision only matters if the full path is coherent, so we help connect facility planning, GPU server sourcing, commissioning, network handoff, and the operating ownership that follows the initial deployment.

Capacity planning, cooling strategy, site assumptions, and deployment scope are all project-specific. We scope the environment against the workload and timeline first, then respond with the practical next step.

Share your power envelope, timeline, and workload profile. We will map a practical deployment path.
